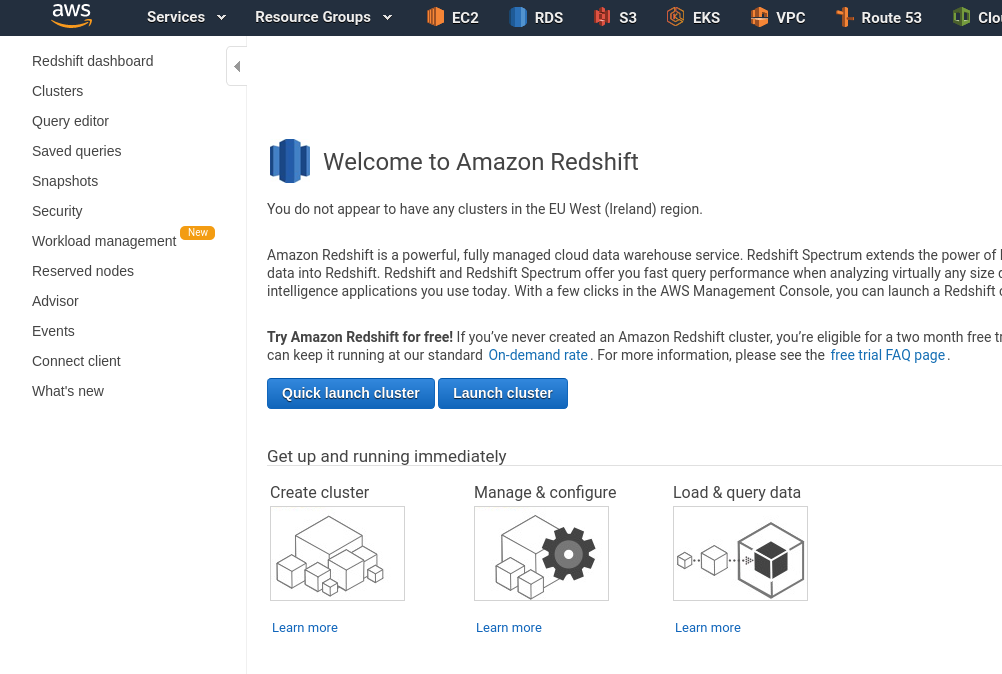

Amazon Redshift is a popular managed data warehouse on AWS. Companies use Redshift to store structured data for traditional data analytics. This makes Redshift a popular source of raw data for computing features for training machine learning models. The Hopsworks Feature Store is the leading open-source feature store for machine learning.

It is provided as a managed service on AWS and it includes all the tools needed to transform data in Redshift into features that will be used when training and serving ML models. In this blog post, we show how you can configure the Redshift storage connector to ingest data, transform that data into features (feature engineering) and save the pre-computed features in the Hopsworks Feature Store. To follow this tutorial, users should have a Hopsworks Feature Store instance running on Hopsworks. You can register for free with no credit card and receive 300 USD of credits to get started. Users should also have an existing Redshift cluster.

If you don't have an existing cluster, you can create one by following the AWS Documentation.

Step 1 - Configure the Redshift Storage Connector

The first step to be able to ingest Redshift data into the feature store is to configure a storage connector. The Redshift connector requires you to specify the following properties. Most of them are available in the properties area of your cluster in the Redshift UI.

- Cluster identifier: The name of the cluster.
- Database driver: You can use the default JDBC Redshift Driver `42.Driver` (More on this later).
- Database endpoint: The endpoint for the database.
- Database name: The name of the database to query.
- Database port: The port of the cluster.

There are two options available for authenticating with the Redshift cluster. The first option is to configure a username and a password. The password is stored in the secret store and made available to all the members of the project. The second option is to configure an IAM role. With IAM roles, jobs or notebooks launched on Hopsworks do not need to explicitly authenticate with Redshift, as the HSFS library will transparently use the IAM role to acquire a temporary credential to authenticate the specified user.

In Hopsworks, there are two different ways to configure an IAM role: a per-cluster IAM role or a federated IAM role (role chaining). For the per-cluster IAM role, you select an instance profile for your Hopsworks cluster when launching it in Hopsworks, and all jobs or notebooks will be run with the selected IAM role. For the federated IAM role, you create a head IAM role for the cluster that enables Hopsworks to assume a potentially different IAM role in each project. You can even restrict it so that only certain roles within a project (like a data owner) can assume a given role.

With regards to the database driver, the library to interact with Redshift *is not* included in Hopsworks - you need to upload the driver yourself. First, you need to download the library from here. Select the driver version without the AWS SDK. You then upload the driver files to the “Resources” dataset in your project, see the screenshot below. Before starting the JupyterLab server, add the Redshift driver jar file, so that it becomes available to jobs run in the notebook.

Step 2 - Define an external (on-demand) Feature Group

Hopsworks supports the creation of (a) cached feature groups and (b) external (on-demand) feature groups. For cached feature groups, the features are stored in the Hopsworks feature store. For external feature groups, only metadata for features is stored in the feature store, not the actual feature data, which is read from the external database or object store.
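To make the Step 1 connector properties more concrete, here is a minimal sketch of how they could fit together into a JDBC connection string. The `RedshiftConnectorProperties` class and its methods are hypothetical helpers written for this illustration and are not part of the HSFS library. The sketch assumes that the database endpoint is the full host name shown in the Redshift UI and that the URL follows the standard `jdbc:redshift://host:port/database` form; the host, database and driver values are placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RedshiftConnectorProperties:
    """Hypothetical container for the Step 1 properties (not an HSFS class)."""

    cluster_identifier: str
    database_endpoint: str  # assumed here to be the full host name of the cluster
    database_name: str
    database_driver: str
    database_port: int = 5439  # Redshift's default port

    def validate(self) -> None:
        """Reject empty text properties and out-of-range ports."""
        for name in ("cluster_identifier", "database_endpoint",
                     "database_name", "database_driver"):
            if not getattr(self, name).strip():
                raise ValueError(f"{name} must not be empty")
        if not 1 <= self.database_port <= 65535:
            raise ValueError("database_port must be between 1 and 65535")

    def jdbc_url(self) -> str:
        """Build a JDBC URL of the form jdbc:redshift://host:port/database."""
        self.validate()
        return (f"jdbc:redshift://{self.database_endpoint}"
                f":{self.database_port}/{self.database_name}")


# Placeholder values for illustration only.
props = RedshiftConnectorProperties(
    cluster_identifier="examplecluster",
    database_endpoint="examplecluster.abc123xyz789.us-west-2.redshift.amazonaws.com",
    database_name="dev",
    database_driver="placeholder.Driver",
)
print(props.jdbc_url())
```

In Hopsworks itself these values are entered when configuring the storage connector, so a helper like this is only useful for checking that the values copied from the Redshift UI form a sensible connection string.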
